Deep QC Should Run on Every Sequencing Dataset. That’s Why I Built Riker.

The case for rebuilding sequencing QC instead of carrying old performance costs forward

Quality control isn’t optional in sequencing.

If you generate a sequencing dataset, you should collect the metrics that tell you how that dataset was made, how it behaved, and whether anything subtle went wrong along the way. That should be standard practice, not a special step reserved for failed runs or high-priority projects.

The problem is that QC tooling has not kept up with current sequencing scale.

For years, Picard has been the default tool for sequencing metrics, and for good reason. It gave the field a common set of measurements and became part of a huge number of production workflows. I spent a lot of time working on it, and I know exactly why it became so widely used.

I also know where it started to fall behind.

Picard reflects an earlier era of sequencing. It is slower than modern pipelines need it to be, some of its assumptions deserve updating, and it has not evolved in step with the scale and complexity of current sequencing workflows. When a tool sits at the base of thousands of pipelines, those shortcomings stop being minor annoyances and become an accumulated tax on every dataset.

That is why I built Riker.

Riker is a modern successor to Picard for sequencing QC metrics. It is faster, cleaner, and built around the idea that metrics collection should happen on every dataset because the information is too useful and too cheap to ignore.

QC should do more than raise a flag

Most teams would agree that QC is important, but they probably don’t think as deeply about it as they could.

A lot of QC tooling is built to answer a narrow question: did this dataset clear a threshold or not? That is useful, but not enough. The more valuable question is often why the data looks the way it does.

That is why tools like Picard became so useful. Picard doesn’t just report a summary number. It helps explain where signal is being lost, what kind of bias is showing up, and which parts of the workflow are most likely responsible. Fantastic tools like mosdepth and perbase can give you mean coverage very quickly, which can tell you that something is off. A richer collector like CollectWgsMetrics can help tell you where the missing bases went and how to start fixing the problem.
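To make the gap between a summary number and a diagnostic metric concrete, here is a minimal toy sketch in Python. It is not how Picard, Riker, mosdepth, or perbase compute anything; the function name `coverage_summary`, the depth threshold of 15, and the two depth profiles are all made up for illustration. It only shows that two datasets can share the same mean coverage while behaving very differently underneath.

```python
from statistics import mean


def coverage_summary(depths, min_depth=15):
    """Summarize a list of per-base depths (hypothetical helper).

    Returns the mean depth, the fraction of positions at or above
    min_depth, and the fraction of positions with no coverage at all.
    """
    total = len(depths)
    return {
        "mean": mean(depths),
        "frac_at_min": sum(1 for d in depths if d >= min_depth) / total,
        "frac_zero": sum(1 for d in depths if d == 0) / total,
    }


# Two invented depth profiles with the same mean but different shapes.
even = [30] * 10               # uniform coverage
uneven = [60] * 5 + [0] * 5    # half the positions have no coverage

even_summary = coverage_summary(even)
uneven_summary = coverage_summary(uneven)

print(even_summary)
print(uneven_summary)
```

Both profiles report the same mean, so a mean-only check cannot tell them apart. The per-threshold and zero-coverage fractions are what reveal that half of the second profile is missing, which is the kind of question a richer collector is meant to answer.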

The diagnostic value is a big part of why sequencing metrics matter. They are not just there to catch failed samples. They help you understand assay behavior, library quality, alignment artifacts, enrichment performance, and process drift over time. They are useful for immediate troubleshooting, but they are also useful for comparing projects, building quality systems, improving operations, and building better models of sample and pipeline performance.

Fast enough to run every time

Once you accept that QC should be run on every sequencing dataset, performance becomes the central concern.

Older tooling made it too easy to compromise. When metrics take too long, people start trimming the workflow. They run fewer collectors, or reserve deeper QC for troubleshooting. Over time, that gives you an inconsistent picture of the data and makes it harder to catch process drift.

I wanted to remove that friction.

Riker is written in Rust because this kind of systems work benefits from speed, efficiency, and tighter control over performance. But the goal was never just to make Picard faster in a newer language. The real goal was to make routine metrics collection cheap enough that the right default becomes obvious: run in-depth QC every time.

That only matters if the outputs deserve confidence, so I also went back through the metrics themselves. I fixed issues, cleaned up implementations, and updated calculations and assumptions where the older logic no longer matched current sequencing practice or current biological understanding.

Riker is faster, but it is also a chance to revisit what these metrics should be doing for people now.

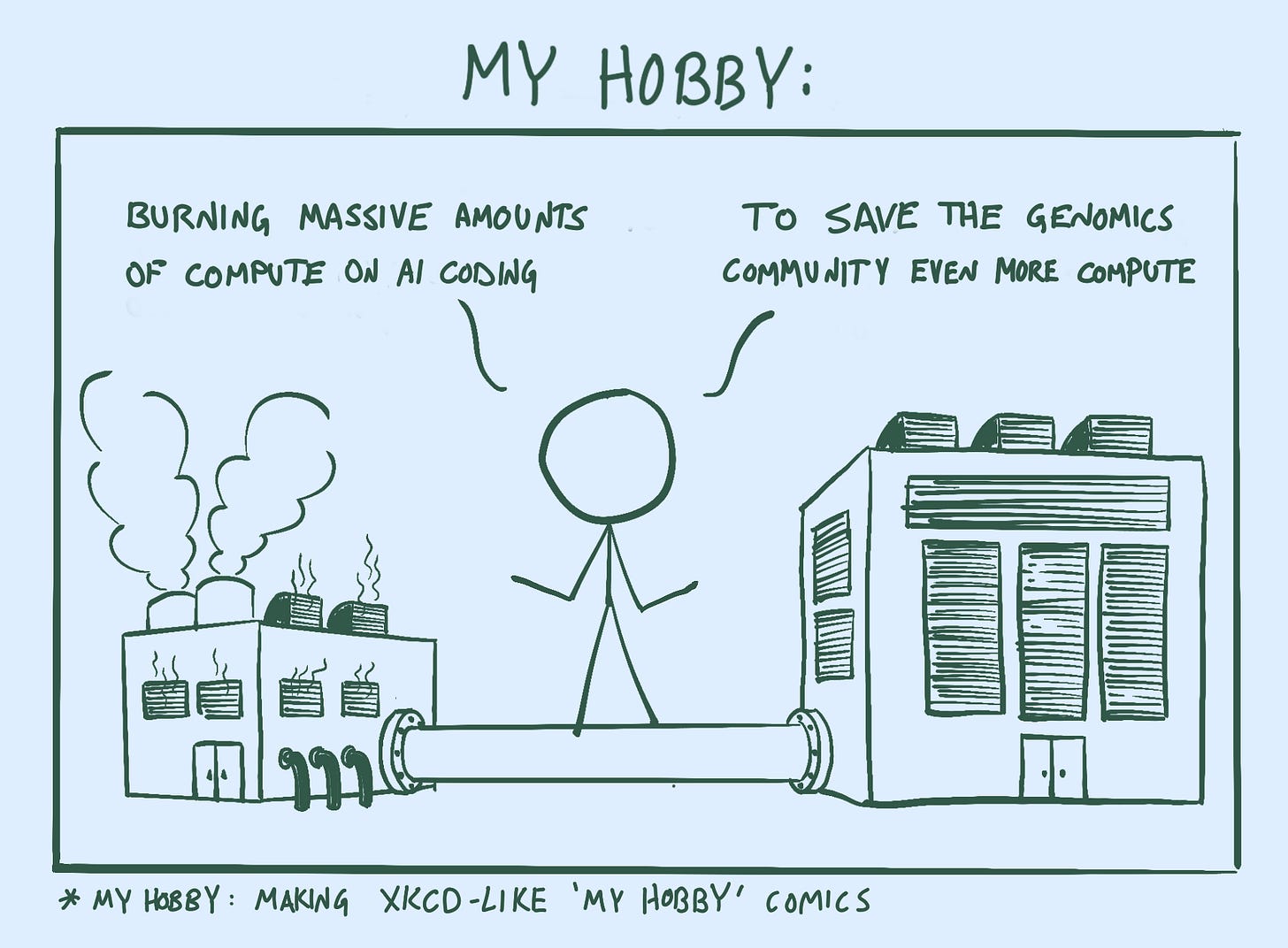

Why using AI here makes sense

There is a lot of justified scrutiny right now around the compute and energy cost of AI. These are real, worthwhile questions. A lot of energy is likely being spent on work that will be forgotten in a week.

But sequencing QC is a different category of problem.

Using AI to help build a foundational tool that will be run thousands or millions of times across real sequencing workflows is a productive use of compute. If that work produces software that is materially more efficient than what came before, the savings compound every time someone runs it. Every faster execution, every reduced resource requirement, every workflow that no longer carries legacy overhead adds up. Catching QC problems sooner can also substantially reduce waste on the wet-lab side.

I am not persuaded by the argument that we should worry about the compute used to build better infrastructure while ignoring the repeated waste of running slower, aging infrastructure at scale. If a focused use of AI helps produce software that saves far more compute over its lifetime than it consumes during development, that is a trade worth making.

Riker clears that bar.

There is no excuse to skip in-depth sequencing QC

Sequencing QC metrics are useful, signal-rich, and cheap enough to run routinely when the tooling is built for it. They help you understand not just whether something went wrong, but why. They support troubleshooting, operations, and machine learning. And they become more valuable when they are collected consistently across every dataset instead of only when someone suspects a problem.

That is the standard behind Riker.

If you generate sequencing data, you should be collecting rich QC metrics on every dataset. The information is too useful, and the cost of collecting it is now too low, to justify treating it as optional.

🔗 https://github.com/fulcrumgenomics/riker

Fulcrum Genomics is a bioinformatics consulting firm built by scientists at the forefront of large-scale genomic research, with deep expertise in sequencing technology, pipeline engineering, and genomic data analysis for biotech, pharma, and academia. Engage us through project-based work, fractional R&D, or hourly consulting. Contact us to discuss your project.